The Era of Token Factories: How Jensen Huang Is Redefining the AI Production Function and the Trillion-Dollar Compute Market

The Shift in AI Narrative: From Model Training to the Inference Economy

Image source: Financial Times

Over the past two years, the AI industry’s primary competitive focus has been “training”: the race to build the most powerful large-scale models. The evolution from GPT-4 to multimodal architectures has centered on pushing the limits of model capability.

However, at NVIDIA GTC 2026, Jensen Huang made it clear: the core arena for AI is shifting from Training to Inference.

This transformation reflects a new business dynamic: training is a one-time investment, while inference creates ongoing demand.

Specifically:

- Training determines what a model can do

- Inference determines how much revenue a model can generate

As a result, AI is evolving from a technology-driven industry into a demand-driven one, and its economics are shifting from one-off capital expenditure (CapEx) toward recurring revenue.

The Token Factory Model: Redefining Data Centers as Production Units

The statement “data centers are Token factories” is not just marketing—it marks a new industrial paradigm. In the traditional internet era:

- Data centers handled compute and storage

- Revenue came from advertising, subscriptions, or transactions

- No direct link existed between computation and revenue

In the AI era, this logic is fundamentally restructured:

- Every model invocation consumes compute resources

- Each computation generates a Token

- Every Token can be monetized

This shift gives data centers, for the first time, the characteristics of production units.

A complete closed loop emerges: Compute investment → Inference computation → Token generation → Revenue realization

Within this framework, NVIDIA’s “AI Factory” concept redefines AI infrastructure using industrial principles:

- Input layer: Electricity + Data

- Middle layer: GPU compute and orchestration systems

- Output layer: Tokens + AI services

In other words, data centers have evolved from server clusters into “power plants” or “manufacturing facilities.”

The Changing AI Production Function: Direct Monetization of Compute Power

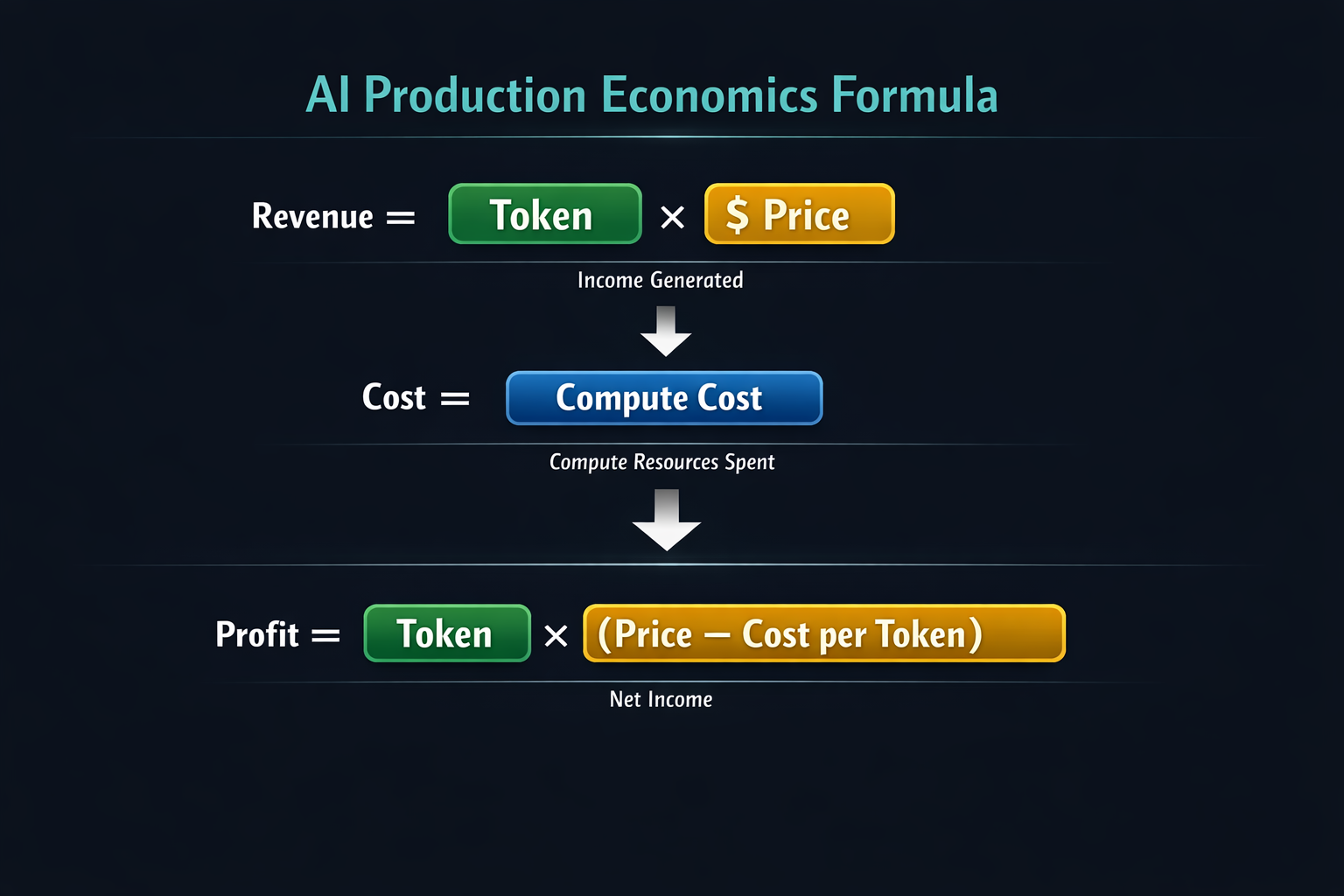

The production function in the AI era can be expressed as:

Revenue = Tokens × Price, Cost = Compute Cost

If compute cost is expressed per Token, so that Cost = Tokens × Cost per Token, then profit simplifies to Profit = Revenue - Cost = Tokens × (Price - Cost per Token)

This model drives three key shifts:

- Revenue is directly linked to compute power: more compute means higher Token output and greater revenue

- Cost structure becomes highly concentrated: compute costs dominate expenditures

- Efficiency is the core competitive edge: the critical metric is how many Tokens can be produced per unit of compute
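The profit relationship and the efficiency point can be sketched in a few lines of Python. This is a minimal illustration, not NVIDIA's accounting: the function names and every figure (hourly compute cost, throughput, price) are hypothetical, and it assumes compute is the only cost.

```python
def cost_per_token(hourly_compute_cost: float, tokens_per_hour: float) -> float:
    """Compute cost attributed to one token (assumes compute is the only cost)."""
    if tokens_per_hour <= 0:
        raise ValueError("tokens_per_hour must be positive")
    return hourly_compute_cost / tokens_per_hour


def profit(tokens: float, price_per_token: float, unit_cost: float) -> float:
    """Profit = Tokens x (Price - Cost per Token)."""
    return tokens * (price_per_token - unit_cost)


# Hypothetical figures, chosen only for illustration.
HOURLY_COST = 4.0          # dollars per hour of compute
PRICE = 2.0 / 1_000_000    # dollars per token sold

baseline = cost_per_token(HOURLY_COST, tokens_per_hour=4_000_000)
improved = cost_per_token(HOURLY_COST, tokens_per_hour=8_000_000)

# Same hardware bill, twice the throughput: the unit cost halves.
print(profit(4_000_000, PRICE, baseline))
print(profit(8_000_000, PRICE, improved))
```

With these made-up numbers, doubling throughput on the same hardware roughly triples hourly profit, because it raises Token output and lowers the cost per Token at the same time.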

Three Major Drivers of Explosive Inference Demand

The anticipated surge in inference demand stems from three structural changes:

Model Capability Upgrades

From simple generation to complex reasoning:

- Multi-step inference

- Long-context processing

- Multimodal integration

Each invocation now incurs significantly higher computational costs.

Expansion of Context Length

AI is shifting from short text processing to:

- 100,000 Tokens

- Even million-token contexts

This dramatically increases computational requirements.

The Rise of Agents

AI Agents can:

- Execute tasks autonomously

- Continuously invoke models

- Create “infinite inference loops”

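As a result, AI’s compute demand stops growing linearly with user count and starts to compound, because more capable models, longer contexts, and self-invoking Agents multiply one another.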
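A toy model shows why Agent loops multiply Token consumption. It assumes, as a simplification, that every step re-reads the full accumulated context and then appends a fixed amount of output; the function names and numbers are hypothetical. Under that assumption, total Tokens grow faster than linearly with the number of steps.

```python
def tokens_single_call(prompt_tokens: int, output_tokens: int) -> int:
    """Tokens processed by one model call: read the prompt, write the output."""
    return prompt_tokens + output_tokens


def tokens_agent_loop(prompt_tokens: int, output_per_step: int, steps: int) -> int:
    """Tokens processed by an agent that calls the model `steps` times.

    Assumption: each step re-reads the whole context so far, then appends
    `output_per_step` new tokens to it.
    """
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context + output_per_step  # tokens read plus tokens written
        context += output_per_step          # the context grows for the next step
    return total


for steps in (1, 10, 100):
    print(steps, tokens_agent_loop(1_000, 200, steps))
```

With these inputs, 10 steps process 21,000 Tokens and 100 steps process 1,110,000, so ten times as many steps cost roughly fifty times as many Tokens.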

AI Service Stratification and Token Pricing

At GTC 2026, NVIDIA also implicitly introduced a stratified AI service model, which is essentially tiered pricing for compute resources.

This system mirrors the layered approach of cloud computing:

- High-end: High-performance GPUs + real-time inference (premium pricing)

- Mid-tier: Standard inference services (mid-range pricing)

- Low-end: Batch or latency-tolerant tasks (discount pricing)

Different scenarios command different Token prices:

- Real-time conversations → High-value Tokens

- Data analysis → Medium-value Tokens

- Offline processing → Low-value Tokens

Ultimately, the decisive factor is who can produce Tokens at the lowest cost and sell them at the highest price.
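The tiering logic can be made concrete with a small sketch. The article gives no actual prices, so the tier names, prices, costs, and volumes below are all hypothetical placeholders; the point is only that margin depends on both the price and the cost of each tier's Tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    price_per_million: float  # dollars charged per million tokens
    cost_per_million: float   # dollars of compute per million tokens


# Hypothetical tiers and figures; not taken from NVIDIA or any provider.
TIERS = [
    Tier("real-time", price_per_million=10.0, cost_per_million=4.0),
    Tier("standard", price_per_million=4.0, cost_per_million=2.0),
    Tier("batch", price_per_million=1.0, cost_per_million=0.75),
]


def tier_margin(tier: Tier, million_tokens: float) -> float:
    """Gross margin in dollars for a given volume served on one tier."""
    return million_tokens * (tier.price_per_million - tier.cost_per_million)


def total_margin(volumes: dict[str, float]) -> float:
    """Sum margins over tiers; `volumes` maps tier name to millions of tokens."""
    by_name = {t.name: t for t in TIERS}
    return sum(tier_margin(by_name[name], v) for name, v in volumes.items())


print(total_margin({"real-time": 100, "standard": 500, "batch": 2_000}))
```

In this example the batch tier carries the most volume but the thinnest margin per Token, while the real-time tier earns more from a twentieth of the volume.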

The Trillion-Dollar Market: Industry Structure Behind the Forecast

Jensen Huang projects that by 2027, the AI chip and infrastructure market could reach $1 trillion.

The core takeaway is that AI is becoming infrastructure—on par with:

- Power systems

- Cloud computing platforms

- Internet networks

This trend will drive three major changes:

Investment Logic Shift

Capital will flow from the application layer back to core infrastructure:

- Data centers

- AI chips

- Energy systems

Industry Chain Restructuring

New central players will include:

- Chip manufacturers (e.g., NVIDIA)

- Cloud service providers

- AI platform companies

- Agent ecosystem developers

Geopolitical and Energy Factors Intensify

AI is no longer just a software issue—it now involves:

- Competition for electricity resources

- Data center site selection

- National compute strategies

The Agent Economy: The Key Variable in Unlimited Inference Demand

If Tokens are products, Agents are the “demand generators.” In the traditional internet, users created demand; in the AI era, Agents themselves generate demand. For example:

- Automated trading Agents continuously analyze markets

- Enterprise Agents handle business processes autonomously

- Developer Agents generate and optimize code automatically

This marks the first emergence of non-human demand entities in the AI economy. Thus, the scale of Agents sets the upper limit for inference demand.

This is why AI competition is shifting rapidly toward:

- Agent frameworks

- Automation systems

- AI workflow platforms

Risks and Controversies: Is the Token Economy Overhyped?

While the “Token Factory” narrative is compelling, significant market concerns remain.

Cost Pressure

- High GPU costs

- Rising electricity prices

- Massive capital required for data center construction

If Token prices decline, profit margins will be squeezed.

Demand Uncertainty

- Will enterprises continue to pay for inference?

- Can Agents truly generate stable demand?

Many AI applications are still experimental.

Technology Substitution Risks

- More efficient models may reduce compute demand

- Edge computing could siphon workloads from data centers

- Open-source models may drive down Token prices

These factors could undermine the long-term stability of the Token economy.

Is AI Becoming an “Industrial System”?

Abstracting the current trend reveals a key analogy:

- Electricity → AI’s energy base

- Data → Raw material

- Compute → Production equipment

- Token → Product

- Agent → Automation system

This structure closely mirrors the production systems that emerged during the Industrial Revolution. It signals AI’s transition from a software industry to a compute-driven industrial system.

Conclusion

Jensen Huang’s “Token Factory” concept, presented at NVIDIA GTC 2026, is more than a metaphor. It redefines the fundamental logic of the AI industry:

- Tokens are the units of production

- Inference is the production process

- Compute power is the core means of production

With the rise of the Agent economy and surging inference demand, the AI infrastructure market is on track to reach a trillion-dollar scale.

If this trend continues, future business competition will be less about products or user numbers—and more about who can produce Tokens most efficiently.
